Chapter 3 Rasters, descriptive statistics and interpolation

3.1 Learning outcomes

By the end of this practical you should be able to:

- Load, manipulate and interpret raster layers

- Observe and critique different descriptive data manipulation methods and outputs

- Execute interpolation of points to a raster layer

- Construct a methodology for comparing rasters

3.2 Homework

Outside of our scheduled sessions you should be doing around 12 hours of extra study per week. Feel free to follow your own GIS interests, but good places to start include the following:

Assignment

This week you need to assess the validity of your topic in terms of data suitability, then sketch out an introduction and literature review including academic and policy documents (or any reputable source) — it’s the same as last week!

Reading

This week:

Chapter 5 “Descriptive statistics” from Learning statistics with R: A tutorial for psychology students and other beginners by Navarro (2019)

Appendix “Interpolation in R” from Intro to GIS and Spatial Analysis by Gimond (2019).

Remember this is just a starting point, explore the reading list, practical and lecture for more ideas.

3.3 Recommended listening

Some of these practicals are long, take regular breaks and have a listen to some of our fav tunes each week.

Adam — this week, from me, it’s a history lesson. 30 Years ago, two DJs started playing tunes in the other room in Heaven, just under Charing Cross Station. The night was called Rage and the DJs were called Fabio and Grooverider. Their mix of house and sped-up breakbeats was an entirely new sound that began to be called ‘Jungle’. The 30 Years of Rage album recalls some of the early tunes that helped shape an entire genre of music. Enjoy!

3.4 Introduction

This practical is composed of three parts. To start with we’re going to load some global raster data into R. In the second part we extract data points (cities and towns) from this data and generate some descriptive statistics and histograms. In the final section we explore interpolation using point data.

3.5 Part 1 rasters

So far we’ve only really considered vector data. Within this practical we will explore some raster data sources and processing techniques. If you recall, rasters are grids of cells with individual values. There are many possible sources of raster data, as it is the data type used for the majority (basically all) of remote sensing data.

3.5.1 WorldClim data

To start with we are going to use WorldClim data. This is a dataset of free global climate layers (rasters) with a spatial resolution of between 1\(km^2\) and 340\(km^2\).

Download the data from: http://worldclim.org/version2

Select any variable you want at the 5 minutes resolution.

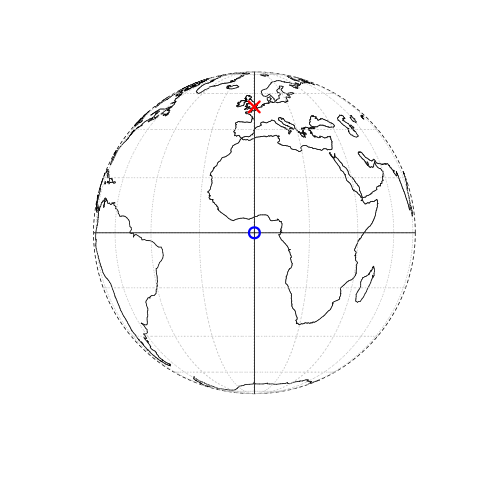

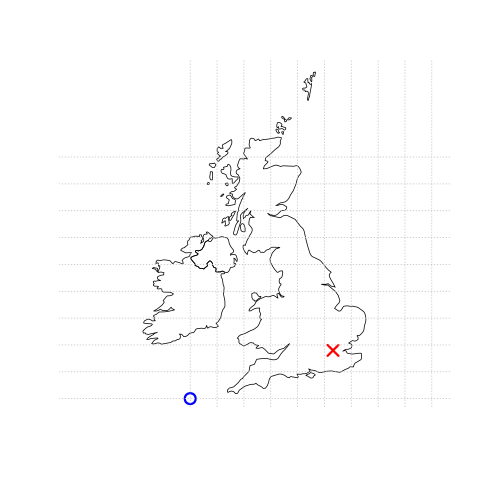

What is a 5 minute resolution, I hear you ask? Well, this geographic reference system treats the globe as if it was a sphere divided into 360 equal parts called degrees. Each degree has 60 minutes and each minute has 60 seconds. Arc-seconds of latitude (horizontal lines in the globe figure below) remain almost constant whilst arc-seconds of longitude (vertical lines in the globe figure below) decrease in a trigonometric cosine-based fashion as you move towards the Earth’s poles. This causes problems because as you increase or decrease latitude the longitudinal lengths alter…For example, at the equator (0°, such as Quito) a degree of longitude is 111.3 km whereas at 60° (such as Saint Petersburg) it is 55.80 km…In contrast, a projected coordinate system is defined on a flat, two-dimensional plane (through projecting a spheroid onto a 2D surface), giving it constant lengths, angles and areas…
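The arithmetic in the paragraph above can be checked in a few lines of base R. This is only a sketch: it assumes a perfectly spherical Earth with 111.32 km per degree at the equator (an assumed round figure), so the value at 60° comes out slightly lower than the ellipsoidal 55.80 km quoted in the text. The function name deg_lon_km is made up for this example.

```r
# Size of a 5 arc-minute cell in decimal degrees (60 minutes per degree)
cell_deg <- 5 / 60
cell_deg

# Approximate ground length of one degree of longitude at a given latitude,
# ASSUMING a sphere where one degree at the equator is 111.32 km
deg_lon_km <- function(lat_deg, equator_km = 111.32) {
  equator_km * cos(lat_deg * pi / 180)
}

# Latitudes of the two example cities used in the text
lon_km <- sapply(c(Quito = 0, `Saint Petersburg` = 60), deg_lon_km)
lon_km

# East-west width of one 5 arc-minute cell at each latitude, in km
lon_km * cell_deg
```

The first value, 5/60 of a degree, is the same 0.08333333 that R reports as the resolution of the WorldClim layer once it is loaded.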

Figure 3.1: This figure is taken directly from Lovelace et al. (2019) section 2.2. Illustration of vector (point) data in which location of London (the red X) is represented with reference to an origin (the blue circle). The left plot represents a geographic CRS with an origin at 0° longitude and latitude. The right plot represents a projected CRS with an origin located in the sea west of the South West Peninsula.

If you are still a bit confused by coordinate reference systems then stop and take some time to have a look at the resources listed here. It is very important to understand projection systems.

The best resources I’ve come across explaining coordinate reference systems are:

https://geocompr.github.io/post/2019/crs-projections-transformations/

https://mercator.tass.com/mercator-heritage — this is the story of Mercator!

Others include:

This YouTube video that shows the differences between geographic and projected coordinate reference systems and some limitations of ArcMap…

And this great YouTube video produced by SciShow shows the compromises of different map projections

- Unzip and move the data to your project folder. Now load the data. We could do this individually….

library(raster)

jan<-raster("prac3_data/wc2.0_5m_tavg_01.tif")

# have a look at the raster layer jan

jan

## class : RasterLayer

## dimensions : 2160, 4320, 9331200 (nrow, ncol, ncell)

## resolution : 0.08333333, 0.08333333 (x, y)

## extent : -180, 180, -90, 90 (xmin, xmax, ymin, ymax)

## crs : +proj=longlat +datum=WGS84 +no_defs +ellps=WGS84 +towgs84=0,0,0

## source : C:/Users/Andy/OneDrive - University College London/Teaching/CASA0005repo/prac3_data/wc2.0_5m_tavg_01.tif

## names : wc2.0_5m_tavg_01

## values : -46.697, 34.291 (min, max)

- Then have a quick look at the data

plot(jan)

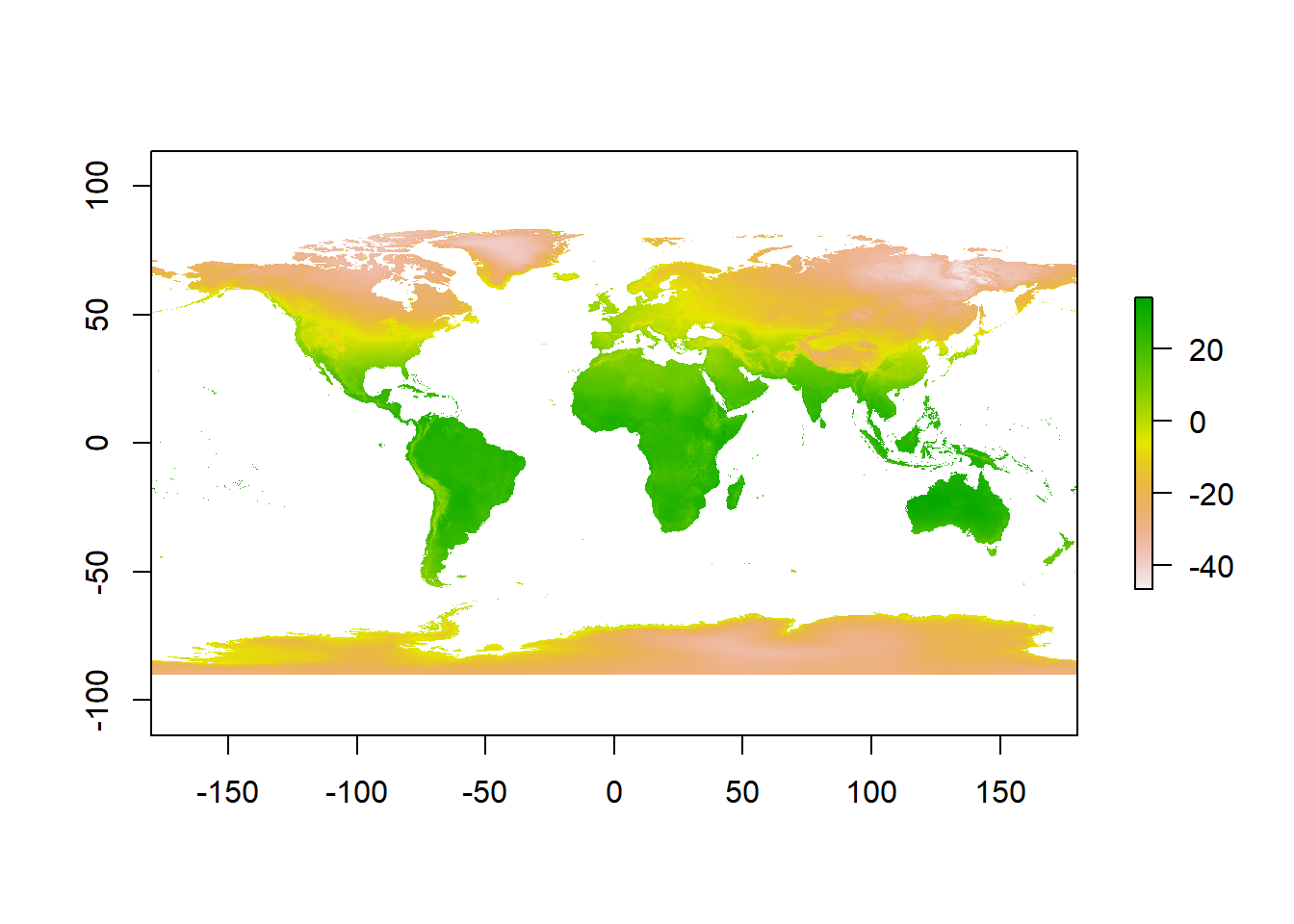

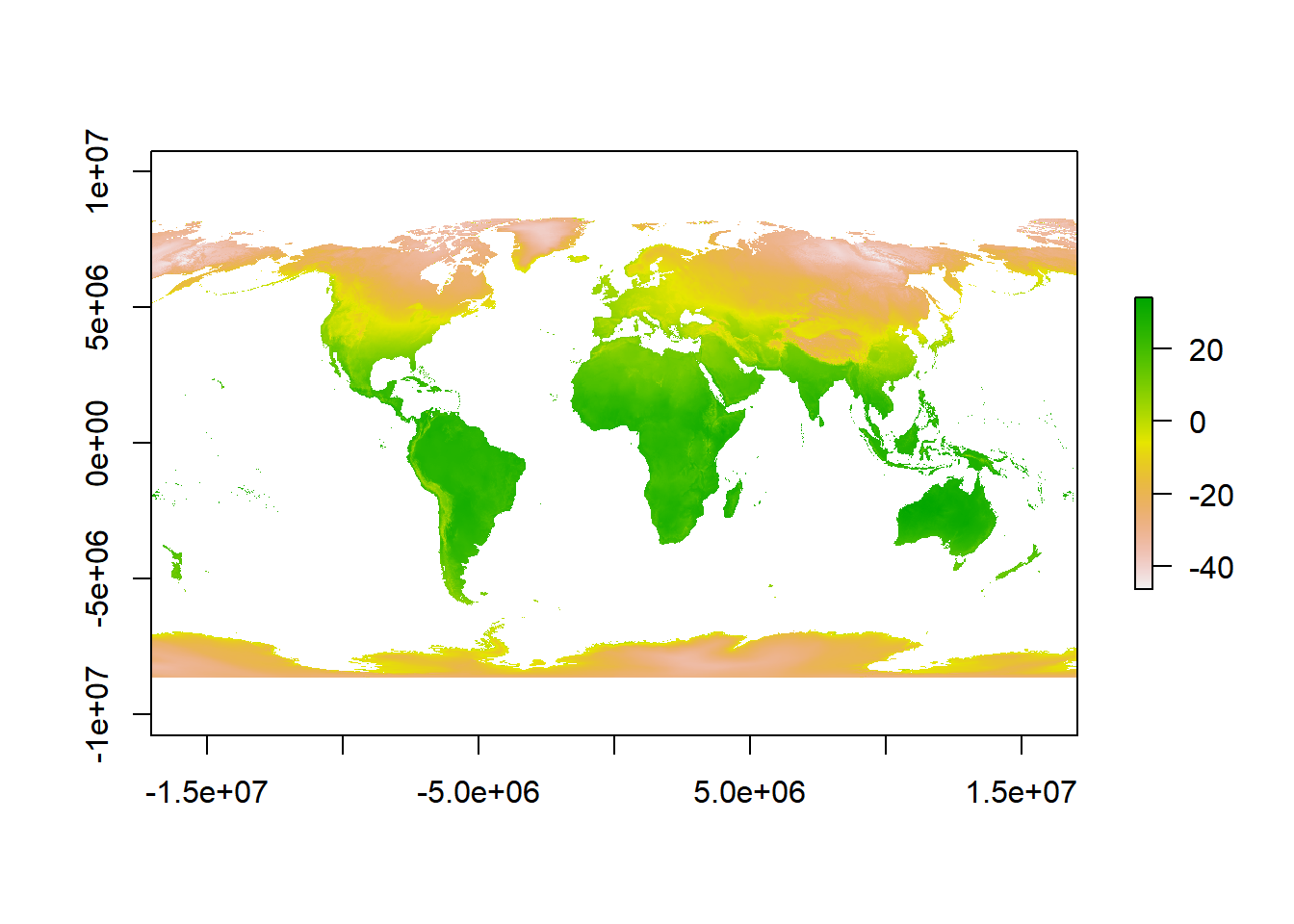

Now we can actually see some data…here is a quick example of using the Robinson projection saved to a new variable. Don’t worry about the code, just take in that it is different to the above plot

library(sf)

# set the proj 4 to a new variable

newproj<-"+proj=robin +lon_0=0 +x_0=0 +y_0=0 +ellps=WGS84 +datum=WGS84 +units=m +no_defs"

# get the jan raster and give it the new proj4

pr1 <- projectRaster(jan, crs=newproj)

plot(pr1)

- A better and more efficient way is to firstly list all the files stored within our directory

# look in our folder, find the files that end with .tif and

# provide their full filenames

listfiles <- list.files("prac3_data/", pattern = "\\.tif$", full.names = TRUE)

# have a look at the file names

listfiles

## [1] "prac3_data/wc2.0_5m_tavg_01.tif" "prac3_data/wc2.0_5m_tavg_02.tif"

## [3] "prac3_data/wc2.0_5m_tavg_03.tif" "prac3_data/wc2.0_5m_tavg_04.tif"

## [5] "prac3_data/wc2.0_5m_tavg_05.tif" "prac3_data/wc2.0_5m_tavg_06.tif"

## [7] "prac3_data/wc2.0_5m_tavg_07.tif" "prac3_data/wc2.0_5m_tavg_08.tif"

## [9] "prac3_data/wc2.0_5m_tavg_09.tif" "prac3_data/wc2.0_5m_tavg_10.tif"

## [11] "prac3_data/wc2.0_5m_tavg_11.tif" "prac3_data/wc2.0_5m_tavg_12.tif"

- Then load all of the data straight into a raster stack. A raster stack is a collection of raster layers with the same spatial extent and resolution.

worldclimtemp <- stack(listfiles)

# have a look at the raster stack

worldclimtemp

## class : RasterStack

## dimensions : 2160, 4320, 9331200, 12 (nrow, ncol, ncell, nlayers)

## resolution : 0.08333333, 0.08333333 (x, y)

## extent : -180, 180, -90, 90 (xmin, xmax, ymin, ymax)

## crs : +proj=longlat +datum=WGS84 +no_defs +ellps=WGS84 +towgs84=0,0,0

## names : wc2.0_5m_tavg_01, wc2.0_5m_tavg_02, wc2.0_5m_tavg_03, wc2.0_5m_tavg_04, wc2.0_5m_tavg_05, wc2.0_5m_tavg_06, wc2.0_5m_tavg_07, wc2.0_5m_tavg_08, wc2.0_5m_tavg_09, wc2.0_5m_tavg_10, wc2.0_5m_tavg_11, wc2.0_5m_tavg_12

## min values : -46.697, -44.559, -57.107, -62.996, -63.541, -63.096, -66.785, -64.600, -62.600, -54.400, -42.000, -45.340

## max values : 34.291, 33.174, 33.904, 34.629, 36.312, 38.400, 43.036, 41.073, 36.389, 33.869, 33.518, 33.667

In the raster stack you’ll notice that under dimensions there are 12 layers (nlayers). The stack has loaded the 12 months of average temperature data for us in order.
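If you want to experiment with stacks before (or without) downloading WorldClim, the same behaviour can be reproduced on tiny in-memory layers. The sketch below is illustrative only: the object names layers and toystack and the constant cell values are made up, and month.abb is R's built-in vector of abbreviated month names.

```r
library(raster)

# Twelve tiny in-memory layers standing in for the monthly GeoTIFFs (toy values:
# every cell of layer m simply holds the number m)
layers <- lapply(1:12, function(m) {
  r <- raster(nrows = 2, ncols = 3, xmn = 0, xmx = 3, ymn = 0, ymx = 2)
  values(r) <- rep(m, ncell(r))
  r
})

# A list of layers with identical extent and resolution can be stacked directly
toystack <- stack(layers)
nlayers(toystack)

# Layers can be pulled out by position...
toystack[[1]]

# ...or, once renamed, by name
names(toystack) <- month.abb
toystack$Jun
```

Stacking only works because every toy layer shares the same extent and resolution, which is exactly the property the WorldClim monthly files have.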

- To access single layers within the stack:

worldclimtemp[[1]]

## class : RasterLayer

## dimensions : 2160, 4320, 9331200 (nrow, ncol, ncell)

## resolution : 0.08333333, 0.08333333 (x, y)

## extent : -180, 180, -90, 90 (xmin, xmax, ymin, ymax)

## crs : +proj=longlat +datum=WGS84 +no_defs +ellps=WGS84 +towgs84=0,0,0

## source : C:/Users/Andy/OneDrive - University College London/Teaching/CASA0005repo/prac3_data/wc2.0_5m_tavg_01.tif

## names : wc2.0_5m_tavg_01

## values : -46.697, 34.291 (min, max)

- We can also rename our layers within the stack:

month <- c("Jan", "Feb", "Mar", "Apr", "May", "Jun",

"Jul", "Aug", "Sep", "Oct", "Nov", "Dec")

names(worldclimtemp) <- month

- Now to get data for just January use our new layer name

worldclimtemp$Jan

## class : RasterLayer

## dimensions : 2160, 4320, 9331200 (nrow, ncol, ncell)

## resolution : 0.08333333, 0.08333333 (x, y)

## extent : -180, 180, -90, 90 (xmin, xmax, ymin, ymax)

## crs : +proj=longlat +datum=WGS84 +no_defs +ellps=WGS84 +towgs84=0,0,0

## source : C:/Users/Andy/OneDrive - University College London/Teaching/CASA0005repo/prac3_data/wc2.0_5m_tavg_01.tif

## names : Jan

## values : -46.697, 34.291 (min, max)

3.5.2 Location data from a raster

- Using a raster stack we can extract data with a single command!! For example let’s make a dataframe of some sample sites — Australian cities/towns.

site <- c("Brisbane", "Melbourne", "Perth", "Sydney", "Broome", "Darwin", "Orange",

"Bunbury", "Cairns", "Adelaide", "Gold Coast", "Canberra", "Newcastle",

"Wollongong", "Logan City" )

lon <- c(153.03, 144.96, 115.86, 151.21, 122.23, 130.84, 149.10, 115.64, 145.77,

138.6, 153.43, 149.13, 151.78, 150.89, 153.12)

lat <- c(-27.47, -37.91, -31.95, -33.87, -17.96, -12.46, -33.28, -33.33, -16.92,

-34.93, -28, -35.28, -32.93, -34.42, -27.64)

# Put all of this information into one data frame

samples <- data.frame(site, lon, lat, row.names="site")

# Extract the data from the Rasterstack for all points

AUcitytemp <- raster::extract(worldclimtemp, samples)

- Add the city names to the rows of AUcitytemp

rownames(AUcitytemp) <- site
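To see what extract returns, it can help to run it on a raster small enough to check by eye. The following is a hedged sketch with made-up data: toy, toystack and pts are hypothetical names, and the two layers only stand in for two months.

```r
library(raster)

# Toy 2 x 2 raster on a 0-2 degree square; cells are numbered row by row
# starting from the top left, so the top-left cell holds 1 and the
# bottom-right cell holds 4
toy <- raster(nrows = 2, ncols = 2, xmn = 0, xmx = 2, ymn = 0, ymx = 2)
values(toy) <- 1:4

# Two layers standing in for two months
toystack <- stack(toy, toy * 10)
names(toystack) <- c("Jan", "Feb")

# Sample sites as a data frame of lon/lat, named via row names
pts <- data.frame(lon = c(0.5, 1.5), lat = c(1.5, 0.5),
                  row.names = c("top_left", "bottom_right"))

# One row per point, one column per layer
vals <- raster::extract(toystack, pts)
rownames(vals) <- rownames(pts)
vals
```

The result is a matrix with one row per site and one column per layer, which is why the city names can be attached afterwards as row names.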

3.6 Part 2 descriptive statistics

Descriptive (or summary) statistics provide a summary of our data, often forming the base of quantitative analysis leading to inferential statistics, which we use to make inferences about our data (e.g. judgements of the probability that the observed difference between two datasets is not by chance).

3.6.1 Data preparation

- Let’s take Perth as an example. We can subset our data either using the row name:

Perthtemp <- subset(AUcitytemp, rownames(AUcitytemp) == "Perth")

- Or the row location:

Perthtemp <- AUcitytemp[3,]

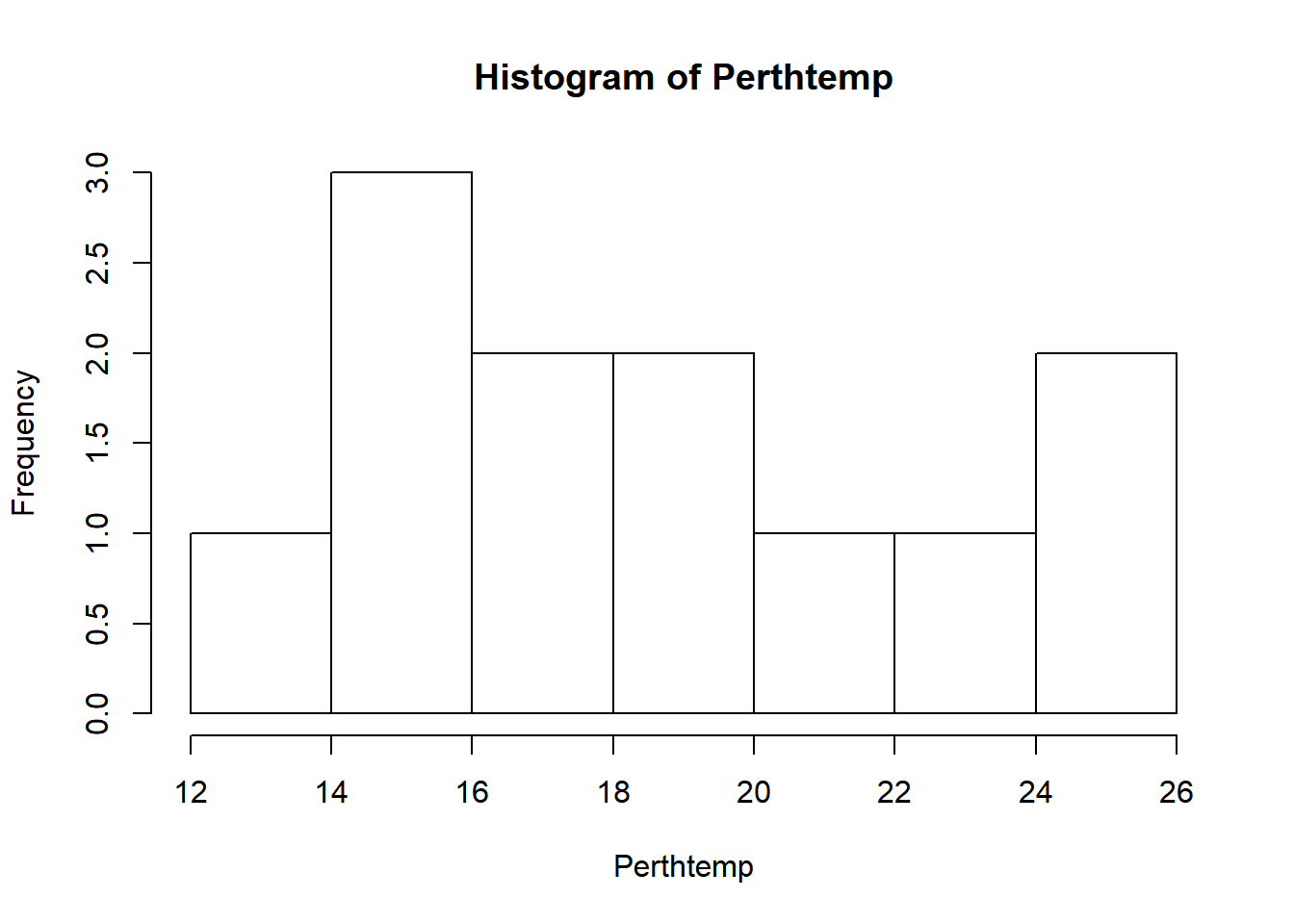

3.6.2 Histogram

A histogram lets us see the frequency distribution of our data.

- Make a histogram of Perth’s temperature

hist(Perthtemp)

Remember what we’re looking at here. The x axis is the temperature and the y is the frequency of occurrence.

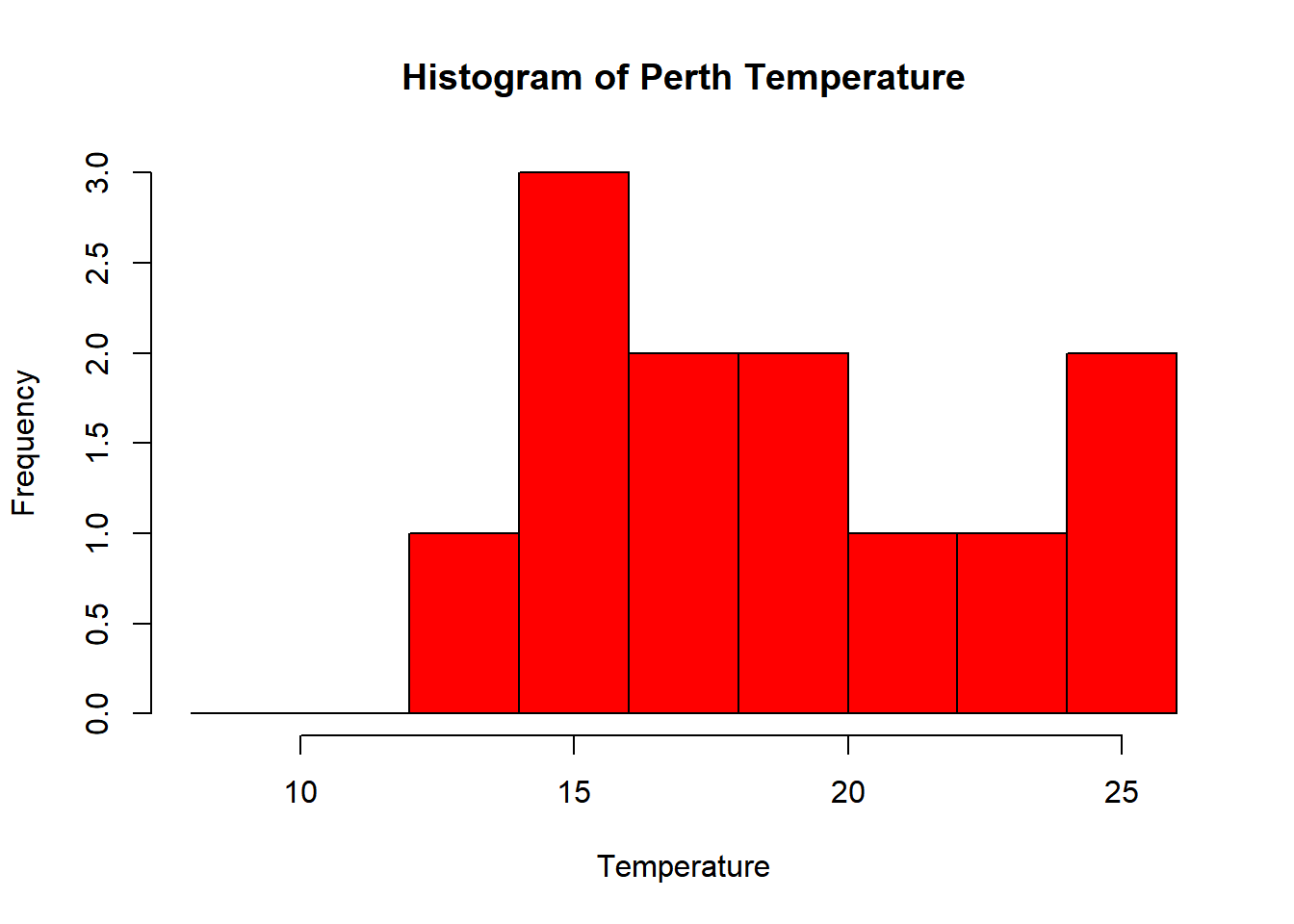

- That’s a pretty simple histogram, let’s improve the aesthetics a bit.

# define where you want the breaks in the histogram

userbreak<-c(8,10,12,14,16,18,20,22,24,26)

hist(Perthtemp,

breaks=userbreak,

col="red",

main="Histogram of Perth Temperature",

xlab="Temperature",

ylab="Frequency")

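- Check out the histogram information R generated

# store the histogram object (the name histinfo is arbitrary) and print it

histinfo <- hist(Perthtemp)

histinfo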

## $breaks

## [1] 12 14 16 18 20 22 24 26

##

## $counts

## [1] 1 3 2 2 1 1 2

##

## $density

## [1] 0.04166667 0.12500000 0.08333333 0.08333333 0.04166667 0.04166667 0.08333333

##

## $mids

## [1] 13 15 17 19 21 23 25

##

## $xname

## [1] "Perthtemp"

##

## $equidist

## [1] TRUE

##

## attr(,"class")

## [1] "histogram"

Here we have:

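- breaks: the cut-off points for the bins (or bars). The output shown here uses R’s default breaks rather than the userbreak values we specified above

- counts: the number of values in each bin

- mids: the middle value for each bin

- density: the density of data per bin, that is the count divided by the total number of values multiplied by the bin width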
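The components listed above can be inspected without drawing anything. The sketch below uses twelve made-up values (not the extracted Perth data) that happen to fall into the same bins as the output shown, so the relationship between counts and density can be verified by hand.

```r
# Twelve made-up monthly values (NOT the Perth data)
toytemp <- c(12.5, 14.2, 15.1, 15.9, 16.5, 17.8,
             18.3, 19.6, 21.0, 23.4, 24.5, 25.2)

# plot = FALSE returns the histogram object without drawing it
h <- hist(toytemp, breaks = seq(12, 26, by = 2), plot = FALSE)

h$breaks   # bin edges
h$counts   # number of values falling in each bin
h$mids     # centre of each bin

# density is the count divided by (number of values x bin width),
# so the bar areas sum to 1
h$counts / (length(toytemp) * diff(h$breaks))
h$density
sum(h$density * diff(h$breaks))
```

With 12 values and bins 2 units wide, a bin holding a single value has a density of 1/24, which is the 0.04166667 seen in the output above.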

3.6.3 Using more data

This was still a rather basic histogram. What if we wanted to see the distribution of temperatures for the whole of Australia in Jan (from averaged WorldClim data) as opposed to just our point for Perth?

First, we need to source and load a vector of Australia. Go to: https://gadm.org/download_country_v3.html and download the GeoPackage

Check what layers are within a GeoPackage using:

st_layers("prac3_data/gadm36_AUS.gpkg")

## Driver: GPKG

## Available layers:

## layer_name geometry_type features fields

## 1 gadm36_AUS_0 Multi Polygon 1 2

## 2 gadm36_AUS_1 Multi Polygon 11 10

## 3 gadm36_AUS_2 Multi Polygon 569 13

- Then read in the GeoPackage layer for the whole of Australia

Ausoutline <- st_read("prac3_data/gadm36_AUS.gpkg", layer = "gadm36_AUS_0")

## Reading layer `gadm36_AUS_0' from data source `C:\Users\Andy\OneDrive - University College London\Teaching\CASA0005repo\prac3_data\gadm36_AUS.gpkg' using driver `GPKG'

## Simple feature collection with 1 feature and 2 fields

## geometry type: MULTIPOLYGON

## dimension: XY

## bbox: xmin: 112.9211 ymin: -55.11694 xmax: 159.1092 ymax: -9.142176

## epsg (SRID): 4326

## proj4string: +proj=longlat +datum=WGS84 +no_defs

Check the layer by plotting the geometry…we could do this through…

plot(st_geometry(Ausoutline))

But as the GeoPackage layer is quite complex (i.e. lots of points) we can simplify it first with the rmapshaper package. Install that now. If it doesn’t load (or crashes your PC) this isn’t an issue. It’s just good practice that when you load data into R you check to see what it looks like…

#load the rmapshaper package

library(rmapshaper)

#simplify the shapefile

#keep specifies the % of points

#to keep

ms_simplify(Ausoutline, keep=0.05)

## Simple feature collection with 1 feature and 2 fields

## geometry type: MULTIPOLYGON

## dimension: XY

## bbox: xmin: 112.9211 ymin: -54.7775 xmax: 159.1054 ymax: -9.221099

## epsg (SRID): 4326

## proj4string: +proj=longlat +datum=WGS84 +no_defs

## GID_0 NAME_0 geom

## 1 AUS Australia MULTIPOLYGON (((158.8517 -5...

This should load quicker, but for ‘publication’ or ‘best’ analysis (i.e. not just demonstrating or testing) I’d recommend using the real file to ensure you don’t simplify a potentially important variable.

Check out this vignette for more information about rmapshaper

- Next, set our map extent to the outline of Australia then crop our WorldClim dataset to it

extent(Ausoutline)

## class : Extent

## xmin : 112.9211

## xmax : 159.1092

## ymin : -55.11694

## ymax : -9.142176

# now crop our temp data to the extent

Austemp <- crop(worldclimtemp, Ausoutline)

# have a look at the output

Austemp

## class : RasterBrick

## dimensions : 551, 554, 305254, 12 (nrow, ncol, ncell, nlayers)

## resolution : 0.08333333, 0.08333333 (x, y)

## extent : 112.9167, 159.0833, -55.08333, -9.166667 (xmin, xmax, ymin, ymax)

## crs : +proj=longlat +datum=WGS84 +no_defs +ellps=WGS84 +towgs84=0,0,0

## source : memory

## names : Jan, Feb, Mar, Apr, May, Jun, Jul, Aug, Sep, Oct, Nov, Dec

## min values : 6.360000, 6.287500, 5.554286, 4.051429, 2.542857, -0.171000, -1.945000, -1.677000, 0.677000, 3.054286, 3.842424, 5.433333

## max values : 34.29100, 33.17400, 32.39700, 30.07200, 28.50000, 27.40000, 26.90000, 27.20000, 29.31209, 31.72000, 33.51800, 33.66700

You’ll notice that whilst we have the whole of Australia the raster hasn’t been perfectly clipped to the exact outline. The extent just specifies a box that will cover the whole of the shape.

- If you want to just get raster data within the outline of the shape:

exactAus <- mask(Austemp, Ausoutline)

You could also run this using the original worldclimtemp raster, however, it may take some time. I’d recommend cropping to the extent first.
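The difference between cropping to an extent and masking to an outline is easiest to see on a toy example. This is a sketch under stated assumptions: the 10 x 10 raster and the triangular "outline" are invented for illustration and built with sp classes rather than read from the GADM file.

```r
library(raster)
library(sp)

# 10 x 10 toy raster with 1-unit cells
r <- raster(nrows = 10, ncols = 10, xmn = 0, xmx = 10, ymn = 0, ymx = 10)
values(r) <- 1:ncell(r)

# A triangular toy "country" outline (ring given clockwise, closed on itself),
# sharing the raster's CRS
ring <- cbind(c(2, 2, 6, 2), c(2, 6, 2, 2))
tri <- SpatialPolygons(list(Polygons(list(Polygon(ring, hole = FALSE)), ID = "toy")),
                       proj4string = crs(r))

# crop() keeps every cell inside the bounding box of the outline
cropped <- crop(r, tri)

# mask() then sets cells outside the outline itself to NA
masked <- mask(cropped, tri)

dim(cropped)
sum(is.na(values(cropped)))
sum(is.na(values(masked)))
```

The cropped raster is a full 4 x 4 box with no missing values, whereas the masked raster has the same dimensions but NA wherever a cell lies outside the triangle. Cropping first also means mask only has to test 16 cells instead of 100, which is the reason for the recommendation above.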

Both our Austemp and exactAus are raster bricks. A brick is similar to a stack except it is now stored as one file instead of a collection.

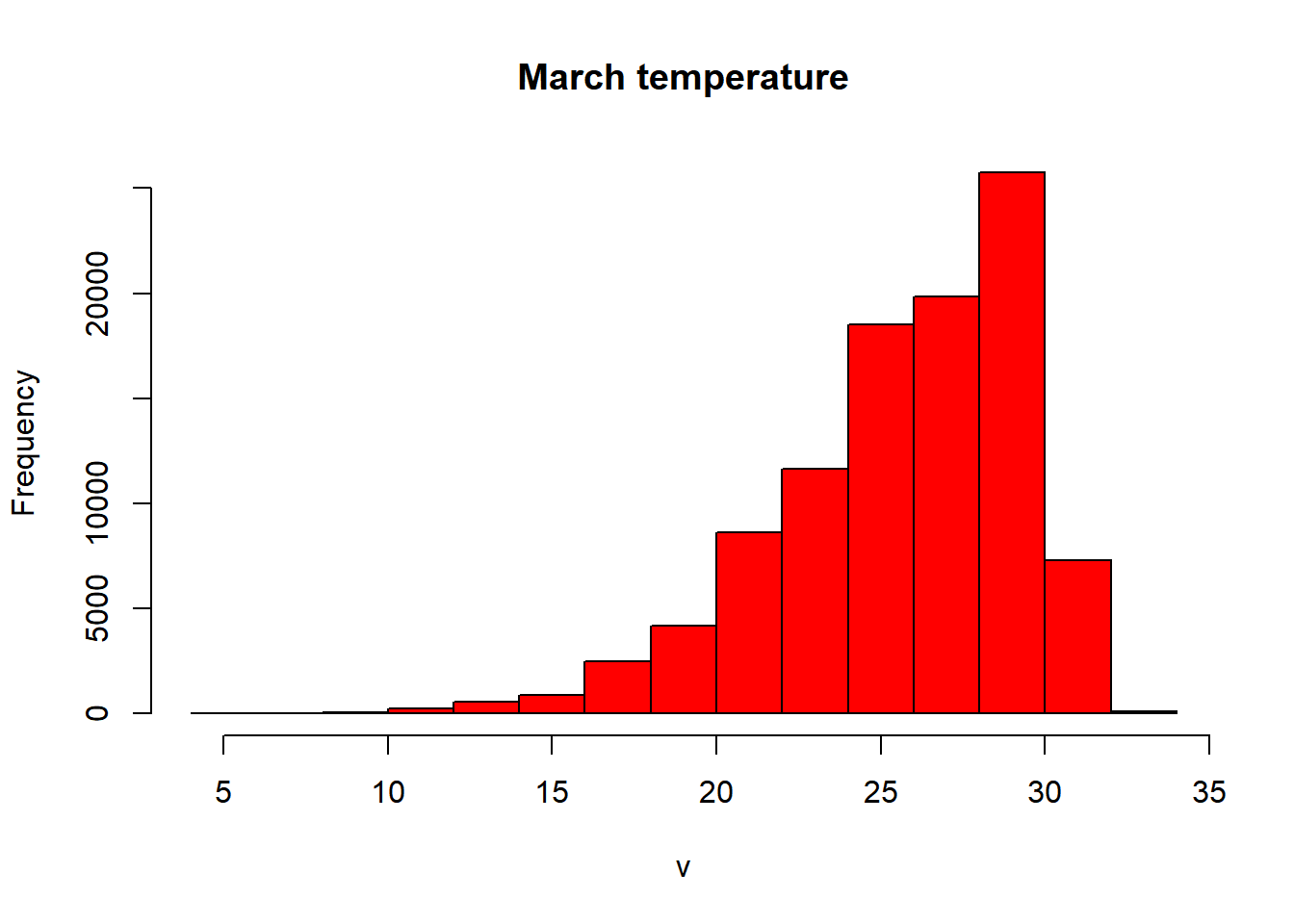

- Let’s re-compute our histogram for Australia in March. We could just use hist like we have done before

However we have a bit more control with ggplot()…

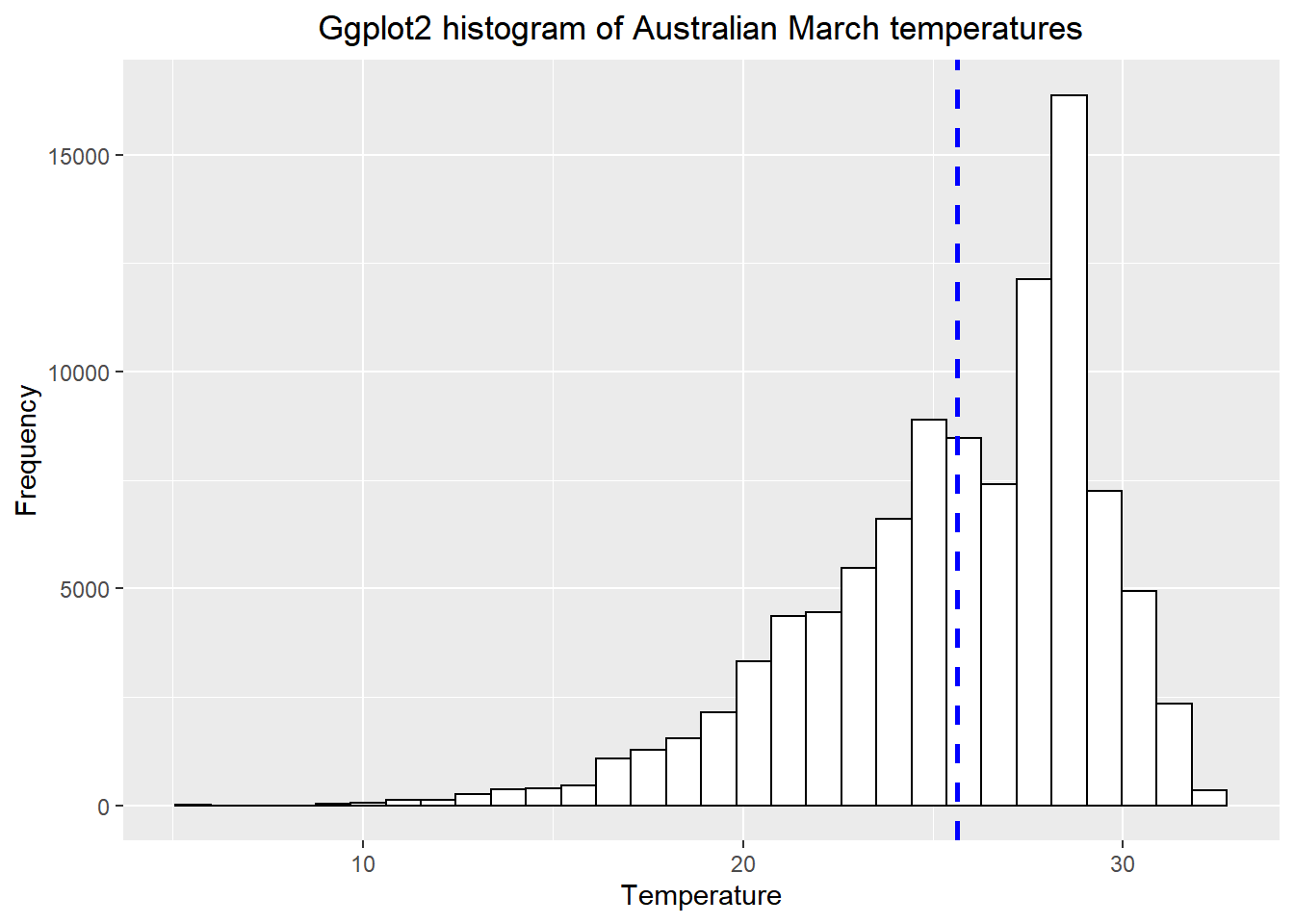

3.6.4 Histogram with ggplot

- We need to make our raster into a data.frame to be compatible with ggplot2

alldf <- as.data.frame(exactAus)

library(ggplot2)

# set up the basic histogram

gghist <- ggplot(alldf,

aes(x=Mar)) +

geom_histogram(color="black",

fill="white")+

labs(title="Ggplot2 histogram of Australian March temperatures",

x="Temperature",

y="Frequency")

# add a vertical line to the histogram showing mean temperature

gghist + geom_vline(aes(xintercept=mean(Mar,

na.rm=TRUE)),

color="blue",

linetype="dashed",

size=1)+

theme(plot.title = element_text(hjust = 0.5))

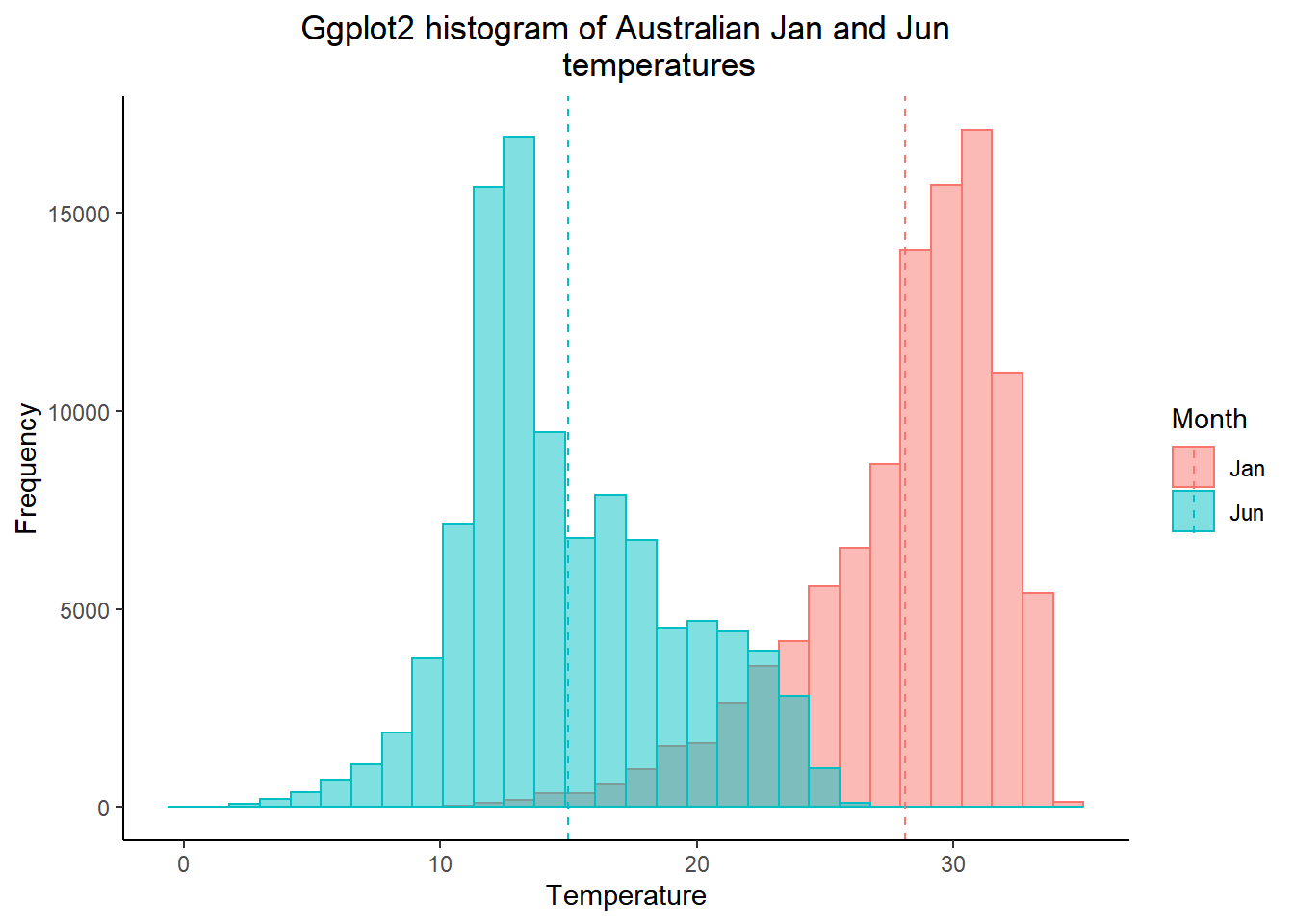

How about plotting multiple months of temperature data on the same histogram?

- As we did in practical 2, we need to put our variable (months) into one column using melt. We will do this based on the names of our columns in alldf…

library(reshape2)

squishdata <- melt(alldf, measure.vars=names(alldf))

We could also use the functions we learnt about in Tidying data
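The reshape, subset and per-month mean steps in this part can be tried on a toy table first. The sketch below deliberately uses base R only (utils::stack and aggregate) rather than melt and ddply, so it is an alternative route to the same shape of result, not the code used in the practical; the data values are made up. Note that utils::stack is called with its namespace because the raster package also defines a function called stack.

```r
# Toy wide table: one column per month, one row per raster cell (made-up values)
wide <- data.frame(Jan = c(28, 30, NA), Jun = c(14, 16, 15))

# Wide to long: every value goes into one column and the source column
# name is recorded in a second column
long <- utils::stack(wide)
names(long) <- c("value", "variable")
long

# Keep the months of interest
two <- subset(long, variable %in% c("Jan", "Jun"))

# Mean per month; the formula interface drops rows with NA by default
means <- aggregate(value ~ variable, data = two, FUN = mean)
means
```

The long table has one row per cell per month, which is why the real squishdata has twelve times as many rows as the raster has cells.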

- Then subset the data, selecting two months

twomonths <- subset(squishdata, variable=="Jan" | variable=="Jun")

- Get the mean for each month we selected

# ddply() comes from the plyr package

library(plyr)

meantwomonths <- ddply(twomonths,

"variable",

summarise,

grp.mean=mean(value, na.rm=TRUE))

colnames(meantwomonths)[colnames(meantwomonths)=="variable"] <- "Month"

head(meantwomonths)

## Month grp.mean

## 1 Jan 28.11321

## 2 Jun 14.96415

- Select the colour and fill based on the variable (which is our month). The intercept is the mean we just calculated, with the lines also based on the column variable.

# rename the column from variable to month so it looks

# nice in the legend of the histogram

colnames(twomonths)[colnames(twomonths)=="variable"] <- "Month"

ggplot(twomonths, aes(x=value, color=Month, fill=Month)) +

geom_histogram(position="identity", alpha=0.5)+

geom_vline(data=meantwomonths,

aes(xintercept=grp.mean,

color=Month),

linetype="dashed")+

labs(title="Ggplot2 histogram of Australian Jan and Jun temperatures",

x="Temperature",

y="Frequency")+

theme_classic()+

theme(plot.title = element_text(hjust = 0.5))

Note how I adjusted the title after I selected the theme. If I had done this before, the theme defaults would have overwritten my command.

- Have you been getting an annoying error message about bin size and non-finate values? Me too!…Bin size defaults to 30 in

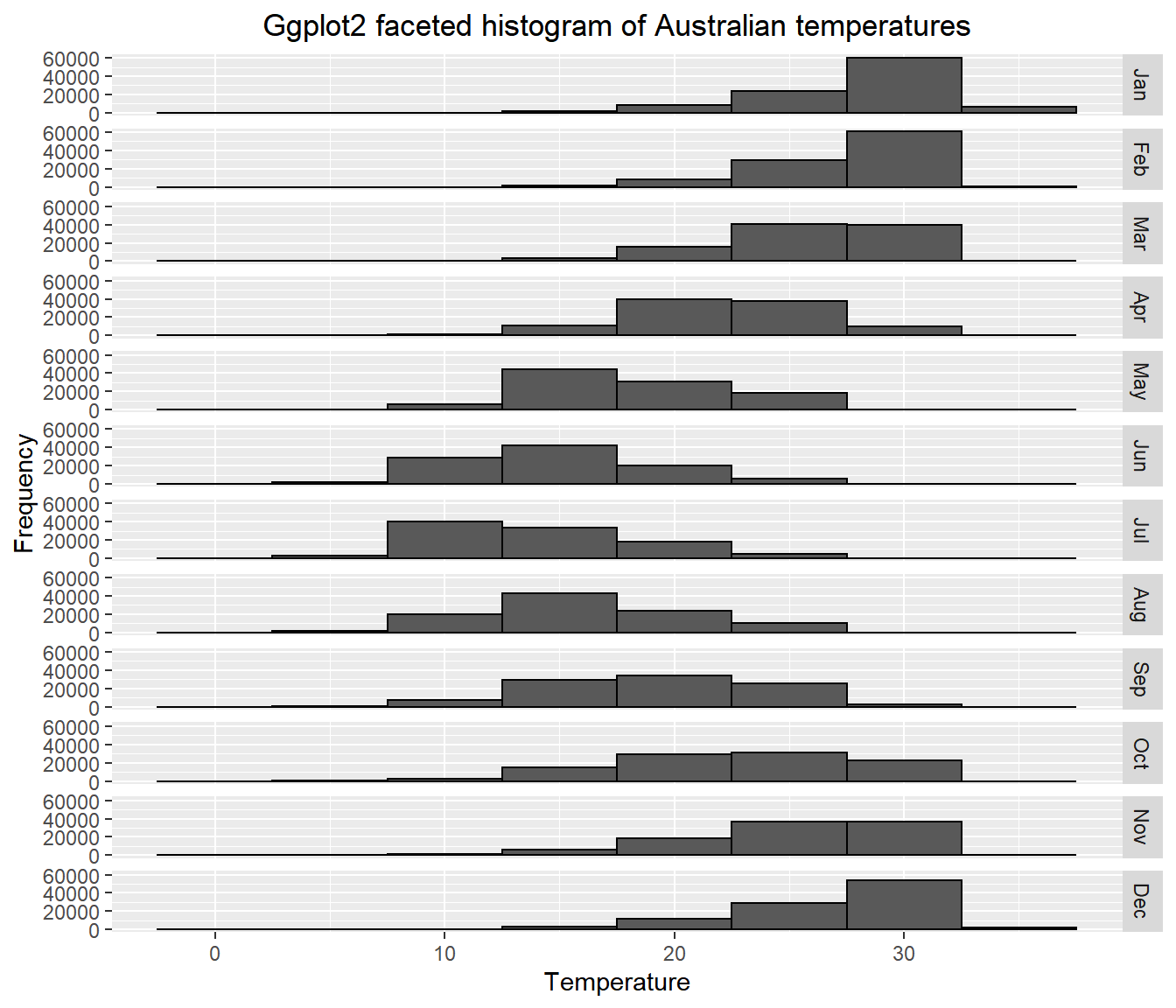

ggplot2and the non-finate values is referring to lots of NAs (no data) that we have in our dataset. In the code below i’ve selected a bin width of 5 and removed all the NAs withcomplete.casesand produced a faceted plot…

# Remove all NAs

data_complete_cases <- squishdata[complete.cases(squishdata), ]

# How many rows are left

dim(data_complete_cases)

## [1] 1201812 2

# Compare with the number of rows before removing NAs

dim(squishdata)

## [1] 3663048 2

# Plot faceted histogram

ggplot(data_complete_cases, aes(x=value))+

geom_histogram(color="black", binwidth = 5)+

labs(title="Ggplot2 faceted histogram of Australian temperatures",

x="Temperature",

y="Frequency")+

facet_grid(variable ~ .)+

theme(plot.title = element_text(hjust = 0.5))

Does this seem right to you? Well…yes. It shows that the distribution of temperature is higher (or warmer) in the Australian summer (Dec-Feb) than the rest of the year, which makes perfect sense.
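The NA filtering used for the faceted plot can be checked on a toy table. This is a minimal base R sketch with made-up values; toylong is a hypothetical stand-in for squishdata.

```r
# Toy long table with missing values, as produced by cells outside the outline
toylong <- data.frame(variable = c("Jan", "Jan", "Jun", "Jun"),
                      value = c(28.1, NA, 14.9, NA))

# complete.cases() is TRUE only for rows with no NA in any column
keep <- complete.cases(toylong)
keep

toycomplete <- toylong[keep, ]

# Rows before and after filtering
dim(toylong)
dim(toycomplete)
```

In the real data roughly two thirds of the rows disappear at this step, which is expected: most cells in the bounding box around Australia are ocean and were set to NA by the mask.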

How about an interactive histogram using plotly…

- See if you can understand what is going on in the code below. Run each line separately.

library(plotly)

# split the data for plotly based on month

jan<-subset(squishdata, variable=="Jan")

jun<-subset(squishdata, variable=="Jun")

# give axis titles

x <- list (title = "Temperature")

y <- list (title = "Frequency")

# set the bin width

xbinsno<-list(start=0, end=40, size = 2.5)

# plot the histogram calling all the variables we just set

ihist<-plot_ly(alpha = 0.6) %>%

add_histogram(x = jan$value,

xbins=xbinsno, name="January") %>%

add_histogram(x = jun$value,

xbins=xbinsno, name="June") %>%

layout(barmode = "overlay", xaxis=x, yaxis=y)

ihist

Ok so enough with the histograms…the point is to think about how to best display your data both effectively and efficiently.

- Let’s change the pace a bit and do a quickfire of other descriptive statistics you might want to use…

# ddply() comes from the plyr package

library(plyr)

# mean per month

meanofall <- ddply(squishdata, "variable", summarise, grp.mean=mean(value, na.rm=TRUE))

# print the top 1

head(meanofall, n=1)

## variable grp.mean

## 1 Jan 28.11321

# standard deviation per month

sdofall <- ddply(squishdata, "variable", summarise, grp.sd=sd(value, na.rm=TRUE))

# maximum per month

maxofall <- ddply(squishdata, "variable", summarise, grp.mx=max(value, na.rm=TRUE))

# minimum per month

minofall <- ddply(squishdata, "variable", summarise, grp.min=min(value, na.rm=TRUE))

# Interquartile range per month

IQRofall <- ddply(squishdata, "variable", summarise, grp.IQR=IQR(value, na.rm=TRUE))

# perhaps you want to store multiple outputs in one list..

lotsofthem <- ddply(squishdata, "variable",

summarise,grp.min=min(value,na.rm=TRUE),

grp.mx=max(value, na.rm=TRUE))

# or you want to know the mean (or some other stat) for the whole year as opposed to each month...

meanwholeyear <- mean(squishdata$value, na.rm=TRUE)

3.7 Part 3 interpolation

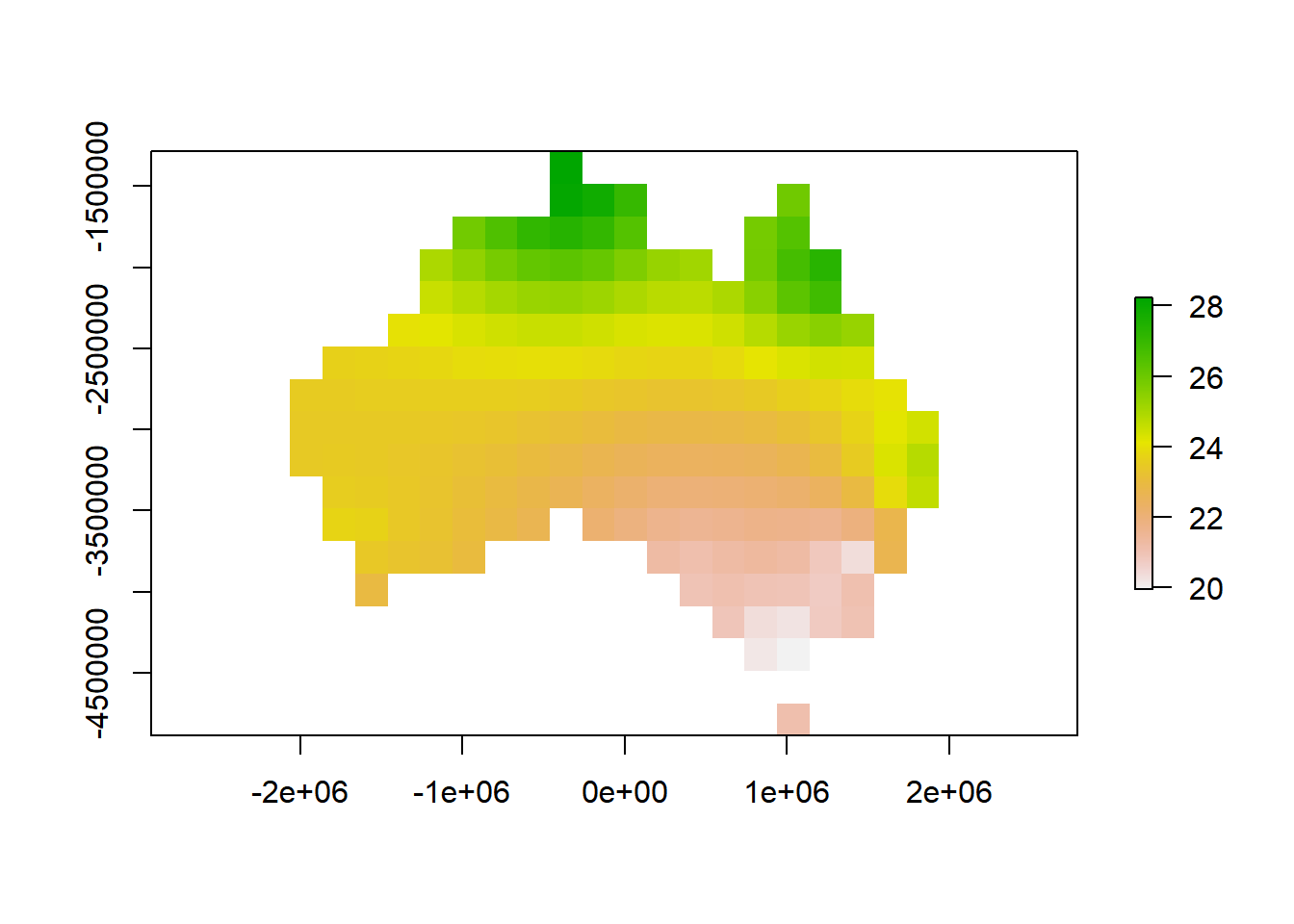

What if you had a selection of points over a spatial area but wanted to generate a complete raster? For this example, we will take our sample points (Australian cities) and estimate data between them using interpolation.

- If you look at our samples and AUcitytemp data the lat and lon is only in the former. We need to have this with our temperature data so let’s combine it using cbind

samplestemp <- cbind(AUcitytemp, samples)

- Now we need to tell R that our points are spatial points

# SpatialPoints() comes from the sp package

library(sp)

# convert samples temp to a data frame

samplestemp<-as.data.frame(samplestemp)

spatialpt <- SpatialPoints(samplestemp[,c('lon','lat')], proj4string = crs(worldclimtemp))

spatialpt <- SpatialPointsDataFrame(spatialpt, samplestemp)

- You’ll notice that here I’ve just nicked the CRS from our worldclimtemp. In general it’s good practice to avoid static or hard-coded references. By that I mean that if we added another column to our samplestemp data (or manipulated it somehow) then using this…don’t run this…

spatialpt <- SpatialPoints(samplestemp[13:14],

proj4string=CRS("+proj=longlat +datum=WGS84 +no_defs +ellps=WGS84 +towgs84=0,0,0 "))

This would give us a headache as columns 13 and 14 might no longer be longitude and latitude.
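The risk with positional indexing is easy to demonstrate on a toy data frame. The sketch below is illustrative only, with made-up values and hypothetical object names (df, df2); it shows the same index silently returning different columns once a column is added, while name-based selection keeps working.

```r
# Toy table: one temperature column followed by lon and lat
df <- data.frame(Jan = c(24.1, 22.8),
                 lon = c(115.86, 151.21),
                 lat = c(-31.95, -33.87))

# Positional selection works for now: columns 2 and 3 are lon and lat
names(df[, 2:3])

# Someone adds a new first column, shifting every position by one
df2 <- cbind(id = c("a", "b"), df)

# The same positions now pick up Jan and lon, with no error or warning
names(df2[, 2:3])

# Selecting by name is unaffected
names(df2[, c("lon", "lat")])
```

Nothing fails when the positions go stale, which is what makes this kind of bug hard to spot in a spatial workflow: the points are simply created in the wrong place.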

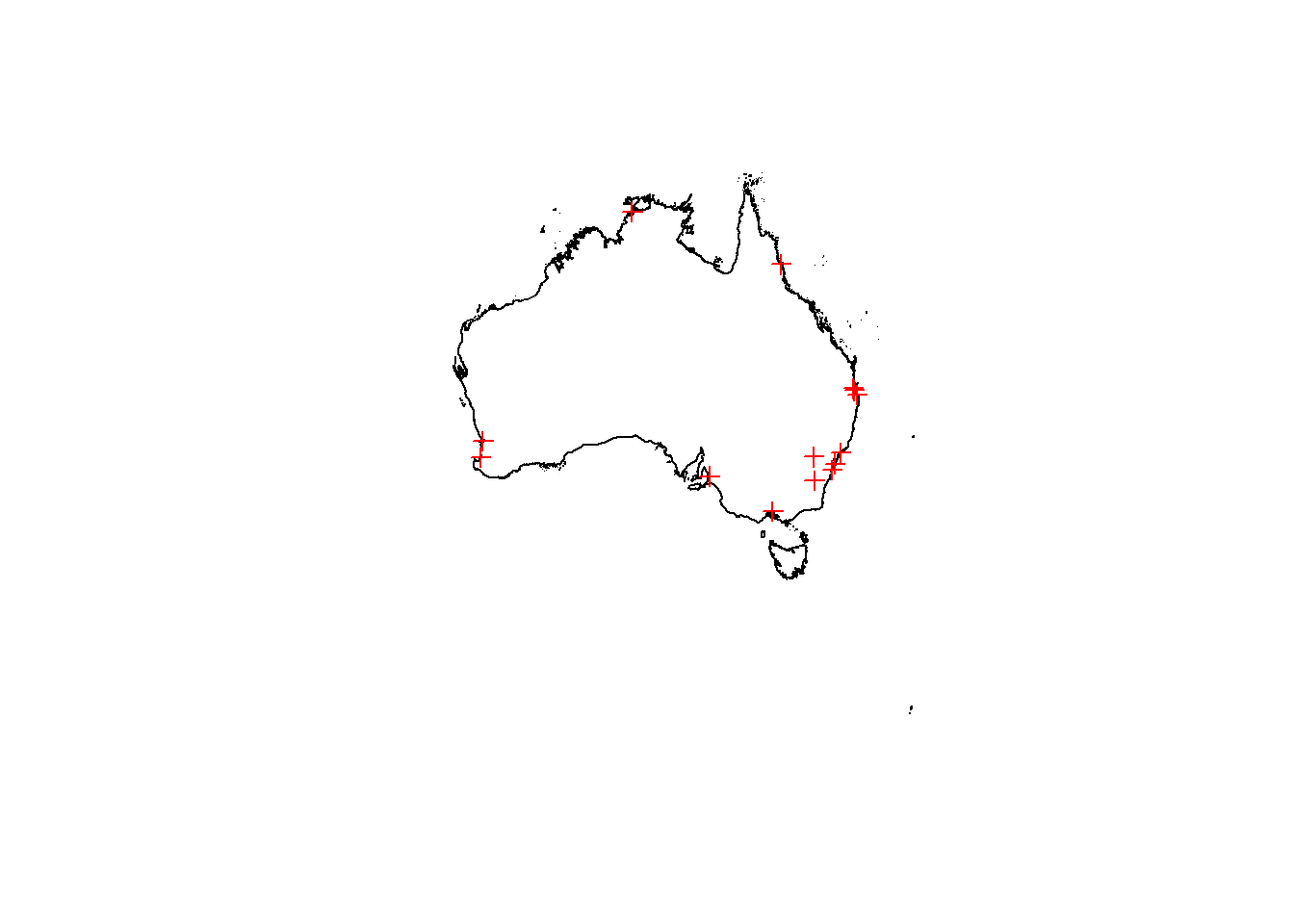

- Right…plot the Australian geometry outline then add our spatial data points on top…

plot(st_geometry(Ausoutline))

plot(spatialpt, col="red", add=TRUE)

- Let’s interpolate using Inverse Distance Weighting, or IDW as it’s more commonly known. IDW is a deterministic method for multivariate interpolation that estimates values for a surface using a weighted average of the provided data. The values closer to the point being predicted have more weight than those further away. The rate at which distance from the provided point impacts the predicted point is controlled by the power p. If p=0 then there is no decrease with distance.

For more infomation see: https://pro.arcgis.com/en/pro-app/help/analysis/geostatistical-analyst/how-inverse-distance-weighted-interpolation-works.htm
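The weighting described above can be written out directly. The block below is a minimal base R sketch of IDW at a single location, not the gstat implementation used later; the function name idw_predict and the three sample points are made up for illustration. Each known value gets a weight of 1 / distance^p and the prediction is the weighted average.

```r
# Predict the value at (x0, y0) from known points (x, y) with values z
idw_predict <- function(x0, y0, x, y, z, p = 2) {
  d <- sqrt((x - x0)^2 + (y - y0)^2)   # distance to every known point
  # At a known location return its value (avoids dividing by zero)
  if (any(d == 0)) return(z[which(d == 0)[1]])
  w <- 1 / d^p                          # closer points get larger weights
  sum(w * z) / sum(w)                   # weighted average
}

# Three made-up sample points and values
x <- c(0, 10, 0)
y <- c(0, 0, 10)
z <- c(10, 20, 30)

# Predict close to the first sample with different powers
p0 <- idw_predict(1, 1, x, y, z, p = 0)
p2 <- idw_predict(1, 1, x, y, z, p = 2)
p0
p2
```

With p=0 every weight equals 1, so the prediction collapses to the plain mean of the samples (20 here). With p=2 the nearest sample, whose value is 10, dominates and the prediction is pulled towards it.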

- To get a meaningful result that we could run some more calculations on, let’s project our data to GDA94 / Geoscience Australia Lambert (EPSG:3112)

spatialpt <- st_as_sf(spatialpt)

spatialpt <- st_transform(spatialpt, 3112)

spatialpt<-as(spatialpt, 'Spatial')

Ausoutline<-st_transform(Ausoutline, 3112)

Ausoutline2<-as(Ausoutline, 'Spatial')

I’ve been a bit lazy here. If you recall from the previous practical session, there are different ways to reproject data depending on whether you have an SP or SF object. SP objects require you to specify the entire CRS string, so I just converted it to SF, used the EPSG code and converted it back to SP. The main reason for doing this is that I made a grid to store my interpolation and, having a remote sensing background, I wanted to specify the pixel size. The equivalent function in SF won’t let you specify pixel size, or there is no easy and straightforward way to do it (at least to my knowledge).

- Next, create an empty grid where cellsize is the spatial resolution, cellsize will overwrite the number of pixels we specified (n). Here as we’ve used a projected CRS i’ve put a high cellsize (in metres) so 200km by 200km cells. You can use a smaller number if you wish but it will take much longer to process.

emptygrd <- as.data.frame(spsample(Ausoutline2, n=1000, type="regular", cellsize=200000))

names(emptygrd) <- c("X", "Y")

coordinates(emptygrd) <- c("X", "Y")

gridded(emptygrd) <- TRUE # Create SpatialPixel object

fullgrid(emptygrd) <- TRUE # Create SpatialGrid object

# Add the projection to the grid

proj4string(emptygrd) <- proj4string(spatialpt)

library(gstat)

# Interpolate the grid cells using a power value of 2

interpolate <- gstat::idw(Jan ~ 1, spatialpt, newdata=emptygrd, idp=2.0)

## [inverse distance weighted interpolation]

# Convert output to raster object

ras <- raster(interpolate)

# Clip the raster to Australia outline

rasmask <- mask(ras, Ausoutline)

# Plot the raster

plot(rasmask)

IDW is just one method for interpolating data, there are many more, if you are interested check out: https://mgimond.github.io/Spatial/interpolation-in-r.html

3.8 Auto data download

In this practical I’ve shown you how to source the data online, download it and load it into R. However, for both WorldClim and GADM we can do this straight from R using the getData function. I’m sorry for making you do it the long way, but it’s good to do things manually to see how they work.

WARNING, this may take some time. I’ve changed the resolution to 10 minutes, but I’d advise not running this in the practical session.

#WorldClim data has a scale factor of 10 when using getData!

tmean_auto <- getData("worldclim", res=10, var="tmean")

tmean_auto <- tmean_auto/10

Now for GADM

# the object name Aus_auto is arbitrary

Aus_auto <- getData("GADM", country="AUS", level=0)

Much more convenient right?

3.9 Advanced analysis

Are you already competent with raster analysis and R? Then have a go at completing this task in the practical session.

Within the practical we’ve loaded one raster layer and created another. Undertake some comparative analysis to determine spatial (and temporal if appropriate) differences between the rasters here and any others you may wish to create (e.g. from other interpolation methods). Try to identify where the variations are and explain why they are occurring.

You could assume that one raster is the ‘gold standard’, meaning it’s believed to be fully correct, and compare others to it.

… Or you could go further than this and obtain weather station temperature data (or any other variable) for multiple sites, interpolate based on 50% of the sites and use the remaining sites to assess the accuracy of your selected method / the WorldClim data.
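One way to structure the hold-out comparison suggested above is sketched below in base R. Everything here is an assumption made for illustration: the "stations" are synthetic, the temperature trend is invented, idw_predict is a hand-written helper rather than a package function, and RMSE is just one possible accuracy measure.

```r
set.seed(1)

# Synthetic stations: temperature falls from south to north plus a little noise
n <- 40
stations <- data.frame(x = runif(n, 0, 100), y = runif(n, 0, 100))
stations$temp <- 30 - 0.1 * stations$y + rnorm(n, sd = 0.5)

# Hand-written IDW predictor for a single location (hypothetical helper)
idw_predict <- function(x0, y0, x, y, z, p = 2) {
  d <- sqrt((x - x0)^2 + (y - y0)^2)
  if (any(d == 0)) return(z[which(d == 0)[1]])
  w <- 1 / d^p
  sum(w * z) / sum(w)
}

# Use a random 50% of the sites to interpolate, hold back the rest
train_id <- sample(n, n / 2)
train <- stations[train_id, ]
test  <- stations[-train_id, ]

# Predict at each held-back site using only the training sites
pred <- mapply(idw_predict, test$x, test$y,
               MoreArgs = list(x = train$x, y = train$y, z = train$temp, p = 2))

# Root mean square error between predictions and held-back observations
rmse <- sqrt(mean((pred - test$temp)^2))
rmse
```

Repeating this with different values of p, or with a different interpolation method in place of idw_predict, gives a like-for-like way to compare approaches on the same held-back sites.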

Free weather station data can be found here: https://rp5.ru/Weather_in_the_world

Have a go and discuss with your fellow students / members of the teaching team during the practical sessions or on Slack.

3.10 Feedback

Was anything that we explained unclear this week or was something really clear…let us know using the feedback form. It’s anonymous and we’ll use the responses to clear any issues up in the future / adapt the material.